AI that puts safety first, not engagement

Students are already turning to AI for emotional support. Alongside is the only evidence-based, clinician-powered platform built specifically for school-age youth and governed by the S.U.R.E. Framework.

The S.U.R.E. Framework

Every interaction on Alongside is evaluated against four non-negotiable dimensions.

Safe

The first and non-negotiable dimension. Every interaction is evaluated for active harm protection before anything else is considered.

- Real-time crisis detection & escalation

- Immediate notification to school counselors

- Links to 988 Suicide & Crisis Lifeline

- Rejection of all inappropriate content

Understandable

Can a young person actually relate to this response? Alongside is built for real kids, not adult users with different emotional needs.

- Age-appropriate reading level (ages 9–18)

- Warm, non-clinical tone

- Culturally responsive language

- Validated by teen advisors

Restricted

The pillar that most directly separates Alongside from companion bots. Alongside is designed to encourage real-world connection, not replace it.

- Zero sycophancy or flattery

- No reinforcement of emotional dependency

- Active redirection toward human connection

- Surfaces underlying unmet needs

Ethical

Alongside never pretends to be human, never claims clinical authority, and never distorts facts, even when the "nicer" answer would be easier.

- No deceptive empathy or false reciprocity

- AI never claims to be human or a clinician

- No misinformation on mental health topics

- Clinician-reviewed content standards

6 ways Alongside is nothing like a companion bot

Companion bots are built to keep users engaged. Alongside is built to promote human-to-human connection.

Built to empower

Designed to equip students with everyday skills, while providing age-appropriate guardrails, clinical protocols, and school counselor integrations.

Doctoral clinician oversight

All content is co-developed by PhD-level clinicians and teens, not just software engineers.

Redirects to real people

When a student reaches out, Alongside doesn't try to be their best friend. It actively guides them toward real human connections and professional support.

Real-time crisis escalation

AI-generated responses are never used for severe disclosures. Crisis events trigger immediate human intervention, not another chatbot response.

Evidence-based outcomes

Backed by ESSA Tier II and III evidence. Alongside is the only ESSA Tier II-validated youth AI wellness tool.

Student privacy by design

Student data is never sold, never used to train third-party models, and always protected under COPPA- and FERPA-compliant infrastructure.

Same question. Very different answers.

This is what the difference looks like in a real conversation with youth.

What is "clinician-powered AI"?

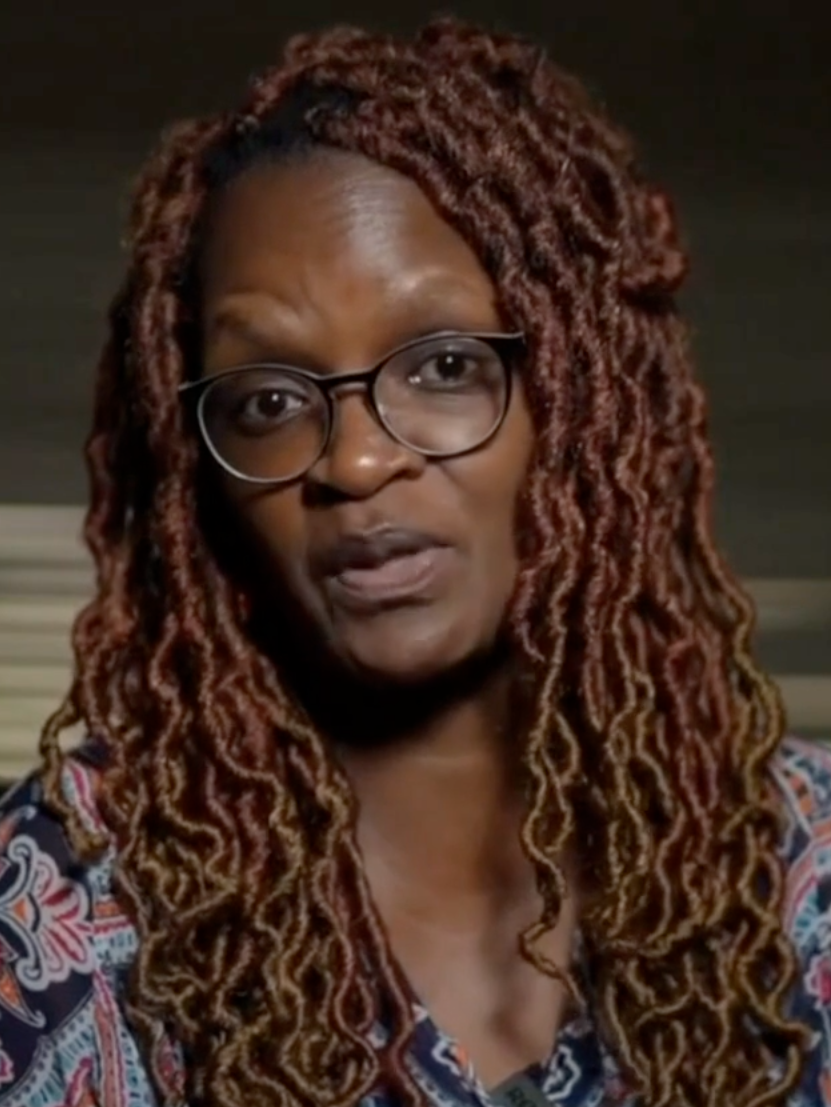

Alongside's AI is co-developed by our VP of Product, Dr. Elsa Friis, Ph.D., and Head of AI, Sergey Feldman, Ph.D. They work in lockstep to ensure every interaction prioritizes student safety above engagement metrics.

Doctoral Clinicians Design the Content & Skills

Chats are developed from best-practice clinical skills and adolescent mental health research, not general consumer preferences.

AI Researchers Prioritize Equitable Design

Our AI team applies evidence-based equity principles to ensure the system works safely across diverse student populations, languages, and backgrounds.

Teen Advisors Validate the Experience

Alongside's teen advisors review content for authenticity, tone, and real-world resonance, because adults can't fully anticipate how students respond.

Questions we hear from school leaders

Your students deserve safe AI.

Not just any AI.

Join hundreds of schools using Alongside to support student mental wellness with clinical oversight, evidence-backed outcomes, and a safety framework built from day one.